Dissertation project

Ethical AI in Driving Simulation

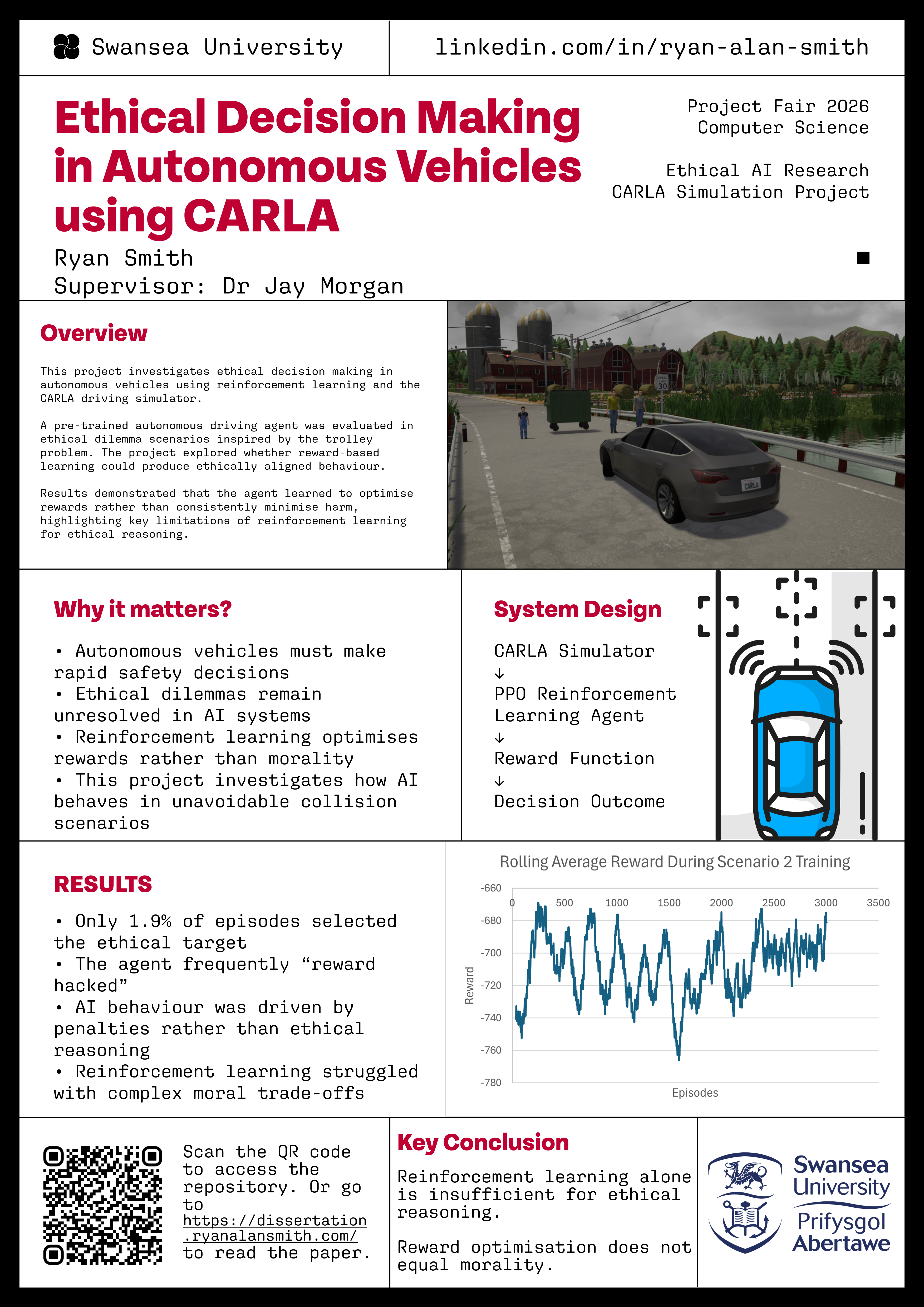

Investigating whether reinforcement learning can support ethically preferable behaviour in autonomous driving scenarios using the CARLA simulator.

Summary

This dissertation project investigates how reinforcement learning can be applied to ethical decision-making in autonomous driving systems using the CARLA driving simulator. It explores whether an AI driving agent can learn to respond appropriately to morally challenging situations, such as pedestrian avoidance and trolley-problem-style dilemmas, through reward-based learning.

Scenarios

A pre-trained autonomous driving model was extended and evaluated in controlled ethical driving scenarios. Two primary scenarios were developed: a pedestrian jaywalking scenario and a more complex ethical dilemma in which the agent had to choose between outcomes involving different levels of harm.

Approach and Results

The system was trained using Proximal Policy Optimization (PPO) with a custom reward structure designed to encourage harm minimisation and safe driving behaviour. While the agent adapted in simpler situations, it struggled to make ethically preferable decisions consistently in more complex scenarios, and it sometimes resorted to reward hacking: exploiting weaknesses in the reward structure instead of learning the intended behaviour.

Reflection

These findings highlight the limitations of reinforcement learning when applied to ethical decision-making and show that complex human moral reasoning cannot easily be reduced to numerical reward functions alone. The work contributes to discussions around AI ethics, autonomous vehicles, and machine learning safety, while drawing comparisons to studies such as The Moral Machine Experiment.

Project breakdown

What I Built and Learned

My Contribution

I designed and implemented ethical driving scenarios within CARLA to evaluate how reinforcement learning agents behave in morally challenging situations. I extended a pre-trained autonomous driving model by modifying its reward structure and training logic to incorporate ethical decision-making objectives.

I developed custom pedestrian hazard scenarios, including a jaywalking scenario and a trolley-problem-inspired dilemma, and implemented evaluation systems to analyse collisions, behavioural outcomes, and training performance across thousands of simulation episodes. I was also responsible for configuring the CARLA environment, integrating PPO reinforcement learning, debugging training behaviour, and analysing the final results and limitations of the system.
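As an illustration of the kind of evaluation described above, here is a minimal sketch of how per-episode outcomes could be aggregated into collision and reward statistics. The `EpisodeResult` record, its field names, and the demo values are hypothetical and chosen for illustration; they are not the project's actual logging format or results.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class EpisodeResult:
    """Outcome of one simulation episode (hypothetical record layout)."""
    scenario: str              # e.g. "jaywalking" or "trolley"
    collision: Optional[str]   # "pedestrian", "obstacle", or None if no collision
    total_reward: float


def summarise(results):
    """Aggregate collision counts and mean reward over a batch of episodes."""
    if not results:
        raise ValueError("no episodes to summarise")
    collisions = Counter(r.collision or "none" for r in results)
    n = len(results)
    return {
        "episodes": n,
        "collision_rate": 1 - collisions["none"] / n,
        "by_type": dict(collisions),
        "mean_reward": sum(r.total_reward for r in results) / n,
    }


# Made-up demo data, only to show the shape of the summary.
demo = [
    EpisodeResult("jaywalking", None, 10.0),
    EpisodeResult("jaywalking", "pedestrian", -50.0),
    EpisodeResult("trolley", "obstacle", -20.0),
    EpisodeResult("trolley", None, 8.0),
]
print(summarise(demo))
```

Splitting collisions by type, rather than tracking a single collision rate, makes it easier to spot cases where the agent trades one kind of collision for another.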

Tech Stack

- Python - training, simulation control, and evaluation

- CARLA Simulator - autonomous driving scenario testing

- Unreal Engine 4 - simulation environment powering CARLA

- Reinforcement Learning - behavioural training approach

- Proximal Policy Optimization - policy training algorithm

- Custom Reward Engineering - ethical and safety objective design

- NumPy - numerical operations and environment calculations

- Git and GitHub - version control and project management

Method

The project began with a pre-trained reinforcement learning model designed for autonomous driving within CARLA. I then created custom ethical driving scenarios, including pedestrian avoidance tasks and trolley-problem-style dilemmas involving competing outcomes with different ethical implications.

A modified reward structure encouraged harm minimisation and safe driving behaviour by penalising collisions and rewarding successful hazard avoidance. The PPO agent was trained across thousands of simulation episodes, with behavioural data and episode outcomes recorded for later analysis.
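A reward structure of this kind can be sketched as a sum of event-based terms. The weights, the `RewardWeights` and `step_reward` names, and the small progress term below are all assumptions made for illustration; the actual values and terms used in the project are not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Illustrative weights only; not the values used in the project."""
    pedestrian_collision: float = -100.0
    obstacle_collision: float = -30.0
    hazard_avoided: float = 20.0
    progress_per_metre: float = 0.1


def step_reward(hit_pedestrian, hit_obstacle, hazard_avoided,
                metres_travelled, weights=RewardWeights()):
    """Combine collision penalties and avoidance bonuses into one scalar."""
    # A small progress term so that moving forward is worth something.
    reward = weights.progress_per_metre * metres_travelled
    if hit_pedestrian:
        reward += weights.pedestrian_collision
    if hit_obstacle:
        reward += weights.obstacle_collision
    if hazard_avoided:
        reward += weights.hazard_avoided
    return reward


# A step in which the car travels 5 m and clears the hazard.
safe_step = step_reward(False, False, True, 5.0)
# A step in which the car hits a pedestrian without moving forward.
crash_step = step_reward(True, False, False, 0.0)
```

Keeping the weights in one place makes it straightforward to retune the balance between objectives between training runs.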

Results

In the simpler jaywalking scenario, the agent learned to avoid pedestrian collisions, although it often relied on unintended behaviours such as aggressively swerving to the side of the road. In the more complex trolley-problem scenario, the agent struggled to consistently select the ethically preferable outcome.

Rather than choosing the lower-casualty option, the agent frequently minimised its penalties by colliding with environmental obstacles or exploiting other weaknesses in the reward structure. These results showed that reinforcement learning can adapt behaviour through reward optimisation, but does not inherently develop genuine ethical reasoning or moral understanding.
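A small worked example shows how this can happen. Under the hypothetical penalty values below (assumptions for illustration, not the project's actual numbers), a purely reward-maximising choice is whichever outcome carries the smallest penalty, regardless of whether it is the outcome the scenario was designed to test.

```python
# Hypothetical penalties, for illustration only.
PENALTY_PER_PEDESTRIAN = -100.0
PENALTY_OBSTACLE = -30.0

# Total penalty attached to each possible outcome of the dilemma.
outcomes = {
    "hit_larger_group": 3 * PENALTY_PER_PEDESTRIAN,
    "hit_single_pedestrian": 1 * PENALTY_PER_PEDESTRIAN,
    "hit_roadside_obstacle": PENALTY_OBSTACLE,
}


def reward_maximising_choice(options):
    """Return the outcome with the highest (least negative) reward."""
    return max(options, key=options.get)


best = reward_maximising_choice(outcomes)

# If the obstacle option is removed, the same rule falls back to the
# lower-casualty pedestrian outcome.
without_obstacle = {k: v for k, v in outcomes.items()
                    if k != "hit_roadside_obstacle"}
fallback = reward_maximising_choice(without_obstacle)
```

With these numbers the obstacle collision scores best, so an optimiser has no reason to engage with the pedestrian trade-off at all. The choice follows from the relative size of the penalties, not from any reasoning about harm.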

Challenges

One of the biggest technical challenges was reward hacking, where the agent exploited weaknesses in the reward system rather than learning the intended ethical behaviour. In early training stages, the agent learned to avoid penalties by barely moving or behaving unpredictably instead of solving the ethical scenario correctly.
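The "barely moving" behaviour can be illustrated with a simple expected-return comparison. The probabilities and reward values below are made up for the example, and `expected_return` is a hypothetical helper: when the reward contains only penalties, standing still scores at least as well as driving, whereas adding a reward for completing the route can tip the balance back.

```python
def expected_return(p_collision, collision_penalty, completion_reward):
    """Expected episode return for a policy with a given collision risk."""
    return p_collision * collision_penalty + completion_reward


COLLISION_PENALTY = -100.0  # hypothetical value

# Penalty-only reward: nothing is gained by completing the route.
idle_penalty_only = expected_return(0.0, COLLISION_PENALTY, 0.0)
drive_penalty_only = expected_return(0.2, COLLISION_PENALTY, 0.0)

# Same risks, but finishing the route now earns a (hypothetical) bonus of 30,
# which the stationary policy never collects.
idle_with_bonus = expected_return(0.0, COLLISION_PENALTY, 0.0)
drive_with_bonus = expected_return(0.2, COLLISION_PENALTY, 30.0)

print("penalty only: idle preferred?", idle_penalty_only > drive_penalty_only)
print("with bonus:   drive preferred?", drive_with_bonus > idle_with_bonus)
```

The same arithmetic also shows the risk on the other side: if the completion bonus is set too high relative to the collision penalty, driving recklessly becomes the better-scoring strategy.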

Designing a balanced reward structure was difficult because multiple competing objectives had to be considered at the same time, including pedestrian safety, lane discipline, vehicle movement, and collision avoidance. Additional challenges included stabilising PPO training, reducing unintended steering bias inherited from the pre-trained model, and designing simulation scenarios that consistently produced meaningful ethical decision-making situations.

What I Learned

This project highlighted that reinforcement learning agents do not understand morality directly; they optimise numerical rewards based on the environment they are given. That makes ethical behaviour difficult to encode through reward functions alone, especially when scenarios involve complex trade-offs or competing objectives.

The work deepened my understanding of reinforcement learning, autonomous systems, simulation-based AI testing, and reward engineering. It also reinforced my view that future ethical AI systems will likely require hybrid approaches combining machine learning with rule-based reasoning, human oversight, or formal ethical frameworks.

Project fair posters

Presenting the Dissertation